10 Tips for a Strong Data Infrastructure

At the start of your data infrastructure strategy, your only stated goal is probably “getting insights from my data.” Mapping that goal to specific actions and a set of technologies is much harder than stating it. This article offers some help navigating the steps as you set out to build a data infrastructure.

Defining Data Infrastructure

Data infrastructure is made up of the components that enable data consumption, storage, and sharing. It provides the foundation a business needs to handle its data, along with the rules and practices for creating, managing, using, and securing it.

It’s important for organizations to build infrastructures that make sense for them. An infrastructure can be as simple or as complex as the organization’s needs require. As the amount and complexity of your data grow, your data infrastructure will become more complicated, too. Even then, it should serve its most critical goal: ensuring that users can work with data easily.

To meet this goal, an organization must establish a solid data infrastructure strategy that:

- Supports data flows

- Ensures the right data gets to the right users

- Minimizes data redundancy while achieving high data reliability

- Maximizes the return on data (examples of data monetization: Data as a Service, Insight as a Service, cross-selling)

- Helps transform information into insight

Components of Data Infrastructures

Before moving on to recommendations on how to establish data infrastructures, let’s quickly cover what such systems are made of.

The specific elements that make up a data infrastructure differ from organization to organization, but most of them fall into a few common categories:

- Physical infrastructure (hardware, cabling, servers)

- Data center facilities (power, rack space, network connectivity)

- Information infrastructure (data assets, repositories, virtualization systems, cloud resources)

- Business infrastructure (BI systems, analysis tools)

How to Build a Robust Data Infrastructure

These are roughly the steps you could follow to ensure your data infrastructure supports your analytics needs.

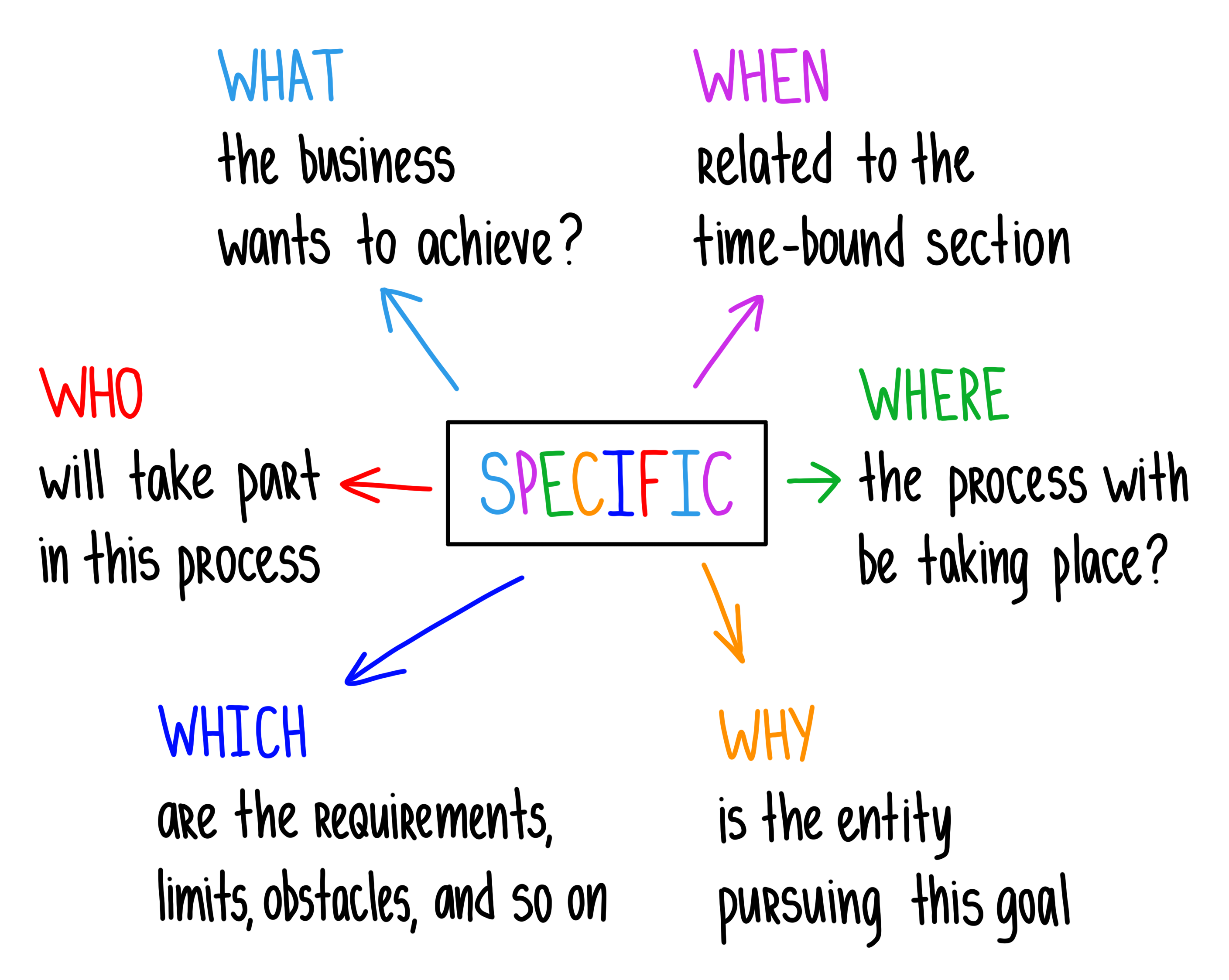

1. Define Your Data Goals

Your data goals can’t be an afterthought because they’ll guide every decision you make along the way. That applies to your data infrastructure first, and it extends to other areas as well. In short, you want to build a digital infrastructure that aligns with your long-term business plan and also supports your short- to mid-term strategies.

Perhaps you want to identify new marketing channels or scrap low-performing ones. You may want to improve the customer experience or optimize your internal processes. Depending on the goal, you’ll be looking at different datasets or using different tools.

If you’re unsure where to start, consider creating a goal hierarchy and working your way down.

2. Structure and Clean Data

Avoid errors in data collection and processing at all costs—these can skew your analytics results and affect numerous departments within your organization.

Data infrastructure hygiene includes the following practices:

- Removing pointless data

- Setting up proper governance as your data comes in

- Updating data as frequently as possible, ideally in real time

- Following retention schedules

- Automating your data cleansing systems

After you eliminate the root causes of error, you should still regularly measure the quality of your data, review your sources, etc. That ongoing maintenance should be much easier than the initial cleanup.
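
To make a few of these hygiene practices concrete, here is a minimal Python sketch of an automated cleaning pass. It is only an illustration under assumptions: the record fields (`id`, `email`, `updated`), the 365-day retention window, and the `clean_records` helper are all hypothetical, not part of any specific tool.

```python
from datetime import date, timedelta


def clean_records(records, today, retention_days=365):
    """Return records that are complete, within retention, and unique by id.

    Field names and the retention window are illustrative assumptions.
    """
    cutoff = today - timedelta(days=retention_days)
    seen_ids = set()
    cleaned = []
    for rec in records:
        # Drop records missing a required field (treated here as pointless data).
        if rec.get("id") is None or not rec.get("email"):
            continue
        # Follow the retention schedule: drop records older than the cutoff.
        if rec["updated"] < cutoff:
            continue
        # Remove duplicates, keeping the first occurrence of each id.
        if rec["id"] in seen_ids:
            continue
        seen_ids.add(rec["id"])
        # Normalize formatting so later comparisons are consistent.
        cleaned.append({**rec, "email": rec["email"].strip().lower()})
    return cleaned


records = [
    {"id": 1, "email": " Ann@Example.com ", "updated": date(2024, 5, 1)},
    {"id": 1, "email": "ann@example.com", "updated": date(2024, 5, 2)},  # duplicate id
    {"id": 2, "email": "", "updated": date(2024, 5, 3)},                 # missing email
    {"id": 3, "email": "bob@example.com", "updated": date(2020, 1, 1)},  # past retention
    {"id": 4, "email": "cy@example.com", "updated": date(2024, 4, 1)},
]

cleaned = clean_records(records, today=date(2024, 6, 1))
print([r["id"] for r in cleaned])  # [1, 4]
```

In practice you would run a pass like this on a schedule, or as data arrives, rather than by hand.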

3. Choose Flexible Tools and Build Flexible Models

You’ll rarely use a single tool for all your data needs. So, as you introduce data management tools into your operations, choose them carefully and keep their number reasonable. Ideally, you want tools that can carry out a wide array of functions: data storage and analysis, distribution and synchronization, etc.

Data models, the structures your data lives in, should also respond to your changing needs. That means building models on a small scale and adapting them over time.

4. Choose the Right Repository for Data Collection

The two most common choices here are a data lake and a data warehouse.

A data lake stores a vast pool of raw data for an undefined purpose. A data warehouse contains filtered, structured information for a specific purpose.

Some companies decide to explore a hybrid solution instead of choosing one repository over the other. Data with minimal business meaning can go into a lake, while useful and relevant data can be stored in a warehouse. Bear in mind that the two use different technologies, so your data infrastructure should account for the differences between them.

5. Create an ETL Pipeline

When you transport data within the organization from a database to an analytics platform, ETL is a common way to do it. Extract, Transform, and Load is an automated process that extracts raw data from its sources, transforms it into a different format, and loads it into a data repository. In other words, it makes data readily available to analysts and decision-makers.

You can also go for ELT (Extract, Load, Transform), which, in contrast, does not require data transformations before the loading process. With ELT, raw data is loaded directly into a target data warehouse, often a scalable cloud-based one, and transformed there.

A well-engineered ETL/ELT pipeline is one of the core technologies that create a powerful data infrastructure. The improved data utilization, returns on your data, and mobility that come with it are now indispensable for a competitive data-driven business.
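
The three ETL steps can be sketched in a few lines of Python using only the standard library. This is a simplified illustration, not a production pipeline: the CSV content, the `orders` table, and the `extract`, `transform`, and `load` function names are assumptions made up for the example, and an in-memory SQLite database stands in for the data repository.

```python
import csv
import io
import sqlite3

# Hypothetical raw export; a real pipeline would read from a file, API, or database.
RAW_CSV = """order_id,amount,currency
1001, 19.99 ,usd
1002,5.00,USD
1003,not-a-number,usd
"""


def extract(raw_text):
    """Extract: read raw rows from the CSV source as dictionaries."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def transform(rows):
    """Transform: coerce types, normalize currency codes, skip unparseable rows."""
    out = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # a real pipeline would log or quarantine this row
        out.append((int(row["order_id"]), amount, row["currency"].strip().upper()))
    return out


def load(conn, rows):
    """Load: write the transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, amount REAL, currency TEXT)"
    )
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(conn, transform(extract(RAW_CSV)))
loaded = conn.execute(
    "SELECT order_id, amount, currency FROM orders ORDER BY order_id"
).fetchall()
print(loaded)  # [(1001, 19.99, 'USD'), (1002, 5.0, 'USD')]
```

An ELT variant would reorder the same pieces: load the raw rows into a staging table first, then run the transformation inside the warehouse, typically in SQL.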

6. Embrace New Technology While Remaining Legacy-Friendly

Organizations building a data infrastructure tend to fall into one of two camps. One clings to the technology it has, failing to upgrade or rework its existing processes. The other tries to deploy as much next-gen technology as possible, wherever it can, which leaves it unable to support legacy data workloads.

Neither approach is optimal, so look for a happy medium between legacy systems and the modern data stack.

If you have legacy data assets (information that resides on software or hardware that has become obsolete) or work with vendors who do, you don’t want to lose that data. At the same time, you don’t want to fall behind on advanced solutions for your data analytics stack. So, look for modern data stack tools that work directly with legacy data sources or integrate with them through third-party services.

7. Take Data Security Measures Seriously

Protect your data infrastructure from potential cyber-attacks, leaks, and malware. For this, you need to focus on:

- Password security – Protect sensitive data assets with strong passwords.

- User permissions – Revoke permissions from users who no longer need access to certain data.

- Encryption – Encrypted data is of little use to attackers who don’t hold the key.

- Patches – Continuously patch and upgrade the software that powers your system.

- Stress-tests – Run security scans and penetration tests for different components of the infrastructure.

- Backups – Keep backups so you can restore critical data in the event of a crash or attack.
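
As one small example from the list above, a periodic permissions review can be partly automated. The Python sketch below flags access grants that have not been used recently so that someone can decide whether to revoke them. The grant records, the 90-day idle threshold, and the `stale_grants` helper are hypothetical; a real system would pull this information from its own access logs.

```python
from datetime import date, timedelta


def stale_grants(grants, today, max_idle_days=90):
    """Return (user, dataset) pairs whose access has been idle too long.

    The threshold is an illustrative assumption, not a recommendation.
    """
    cutoff = today - timedelta(days=max_idle_days)
    return [(g["user"], g["dataset"]) for g in grants if g["last_used"] < cutoff]


grants = [
    {"user": "ana", "dataset": "sales", "last_used": date(2024, 5, 20)},
    {"user": "ben", "dataset": "payroll", "last_used": date(2023, 11, 2)},
    {"user": "ben", "dataset": "sales", "last_used": date(2024, 4, 30)},
]

to_review = stale_grants(grants, today=date(2024, 6, 1))
print(to_review)  # [('ben', 'payroll')]
```

The output is a review list rather than an automatic revocation, since some rarely used access may still be legitimate.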

8. Outline Roles and Fill Them With the Right People

Whether you’re a startup or an established business with a core IT team, you’ll need different people to fit different roles in managing your data infrastructure. The roles can include developers, governance validators, experts, and business analysts.

Consider appointing someone to set and enforce standards for incoming data. This helps catch data quality issues early, saving time and money for other departments.

9. Encourage Collaboration

Building a strong data infrastructure is a huge undertaking, made possible only by a team effort. IT people and those on the customer-facing side should come together to support it. It takes more than one person to determine what data is important, what metrics to use for data analysis, how to create visualizations, what insights can be drawn from the results, and what decisions need to be made based on them.

Have your entire team ready for the infrastructure changes to avoid hiccups in the workflow:

- Use a common language and compile a data glossary

- Offer both hard- and soft-skills training

- Encourage questions (“what is our data strategy?”, “what is the difference between predictive and prescriptive analytics?”, “how do I create visualizations from datasets?”, etc.)

- Personalize learning journeys

- Give opportunities to apply data skills

10. Cultivate and Sustain a Learning Culture

As a developing field, data infrastructure constantly offers something new. To build a resilient workforce that adapts to the changing nature of data management, equip your workers with the necessary data skills.

Some will need only general knowledge and an understanding of why data is collected. Others will need continuous upskilling. Since both share the same workplace, it’ll be helpful to make learning an organizational value.

Challenges for Building and Maintaining a Robust Data Infrastructure

Data is not perfect by default—there are many things that can go wrong even at the very first stages of constructing a data infrastructure. Even more obstacles will arise as the company scales up.

Effective data analytics processes face three significant barriers, which you should address and break down proactively.

Quality

High-quality data is the key variable in any data system. Notably, poor-quality data is likely to render any data infrastructure useless—at least until it’s fed better data.

Here are the characteristics that data assets must have to benefit an organization:

- Accurate

- Complete

- Consistent

- Timely

- Valid

- Unique

These requirements apply to new data as well as to data already ingested into an existing data infrastructure. That is why a data infrastructure solution should always involve data quality management.
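
Some of these characteristics can be measured directly. The Python sketch below scores a small dataset on completeness, uniqueness, validity, and timeliness; accuracy and consistency are left out because they usually need a trusted reference source to compare against. The field names, the simple `"@"` validity rule, the 30-day freshness window, and the `quality_report` helper are all assumptions chosen for illustration.

```python
from datetime import date


def quality_report(rows, required, key, is_valid, today, max_age_days):
    """Return the share of rows (0.0 to 1.0) that pass each quality check."""
    total = len(rows)
    if total == 0:
        raise ValueError("cannot score an empty dataset")
    # Complete: every required field is present and non-empty.
    complete = sum(all(r.get(f) not in (None, "") for f in required) for r in rows)
    # Unique: number of distinct key values relative to the number of rows.
    unique = len({r[key] for r in rows})
    # Valid: rows that satisfy the caller-supplied rule.
    valid = sum(1 for r in rows if is_valid(r))
    # Timely: rows updated within the allowed age.
    timely = sum(1 for r in rows if (today - r["updated"]).days <= max_age_days)
    return {
        "completeness": complete / total,
        "uniqueness": unique / total,
        "validity": valid / total,
        "timeliness": timely / total,
    }


rows = [
    {"id": 1, "email": "ann@example.com", "updated": date(2024, 5, 30)},
    {"id": 2, "email": "", "updated": date(2024, 5, 29)},
    {"id": 2, "email": "bob-at-example.com", "updated": date(2024, 1, 1)},
    {"id": 3, "email": "cy@example.com", "updated": date(2024, 5, 31)},
]

report = quality_report(
    rows,
    required=["id", "email"],
    key="id",
    is_valid=lambda r: "@" in (r.get("email") or ""),
    today=date(2024, 6, 1),
    max_age_days=30,
)
print(report)
```

Tracking scores like these over time gives you an early signal when a source starts delivering worse data.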

Accessibility

The next challenge is ensuring that specific content is easy to find and retrieve. It doesn’t matter how organized or clean your information is if only developers can access it. When there is no regard for data accessibility—when data is siloed, improperly labeled, or misfiled—organizations get no value from it. It’d be like searching for a needle in the haystacks of databases.

So, if your goal is to make data-informed decisions quickly, you need to build an infrastructure that allows everyone in the company to access the data they need.

Volume

The amount of data created each year keeps growing. But the 33 zettabytes of data we’ve created by now is nothing compared to the forecasts for the next decade or so.

Even in the short term, you should expect your data volume to grow and prepare for it. So, set a task for your data engineers: rebuild the existing data infrastructure, or build one from scratch, with an emphasis on supporting the ever-growing flow of data.

3 Stages of Data Infrastructure

There is no one path to architect data infrastructure, but there are growth stages that many companies go through—from operating with little data to going big.

Stage 1: Having Small Data

If you have small amounts of business data (some suggest less than 5TB qualifies as such), build your digital infrastructure appropriately.

Save yourself operational headaches from maintaining data infrastructures you don’t need yet. If you need to build your own data pipelines, keep them extremely simple at first. Set up a machine to run your ETL script(s) and introduce a good BI tool to get your analytics off the ground.

Stage 2: Having Medium Amounts of Data

With more data floating around (e.g., more than a few terabytes) and more third parties to gather data from, your simple scripts might not be able to keep up.

At this point, you’ll need to start building a more scalable infrastructure. Prioritize tools that are simple to configure, have proper security/auditing, and support complex types of data (for the future). You’ll also want to introduce multiple stages in your ETL pipelines and build “real” data warehouses (or lakes).

Stage 3: Dealing With Big Data

With the right foundation, further growth should be smooth. Hardware will be the least of your problems; you’ll likely just need more of it. What will be more challenging is the expanding requirements for big data: near-real-time infrastructure, security and auditing, and compliance.

The good news is that, with some exceptions, these tasks can be handled by the wide variety of tools and services available today. You don’t need to build infrastructure in-house or manage physical servers yourself.

The Bottom Line

Before you can benefit from your data and continually get perceptive insights, there needs to be an infrastructure in place. It means having all the components for collecting data, cleaning it, and making it accessible. And the list of practices outlined in this article will help you build a well-oiled machine for data analytics.

Consider the role of employees in your data infrastructure, too – all the practices for handling data should be clear, the right people should have the skills and resources to uncover information, and everyone should be on the same page about the business goals that your data should serve.

It’s also helpful to assess the performance of your data systems. Look for data acquisition problems, insufficient capabilities, bottlenecks, etc., and then make the necessary modifications to your data infrastructure so that you remain an ever-evolving, data-driven organization.
